Why does the test diamond model make sense?

Optimize software testing for faster but stable product delivery.

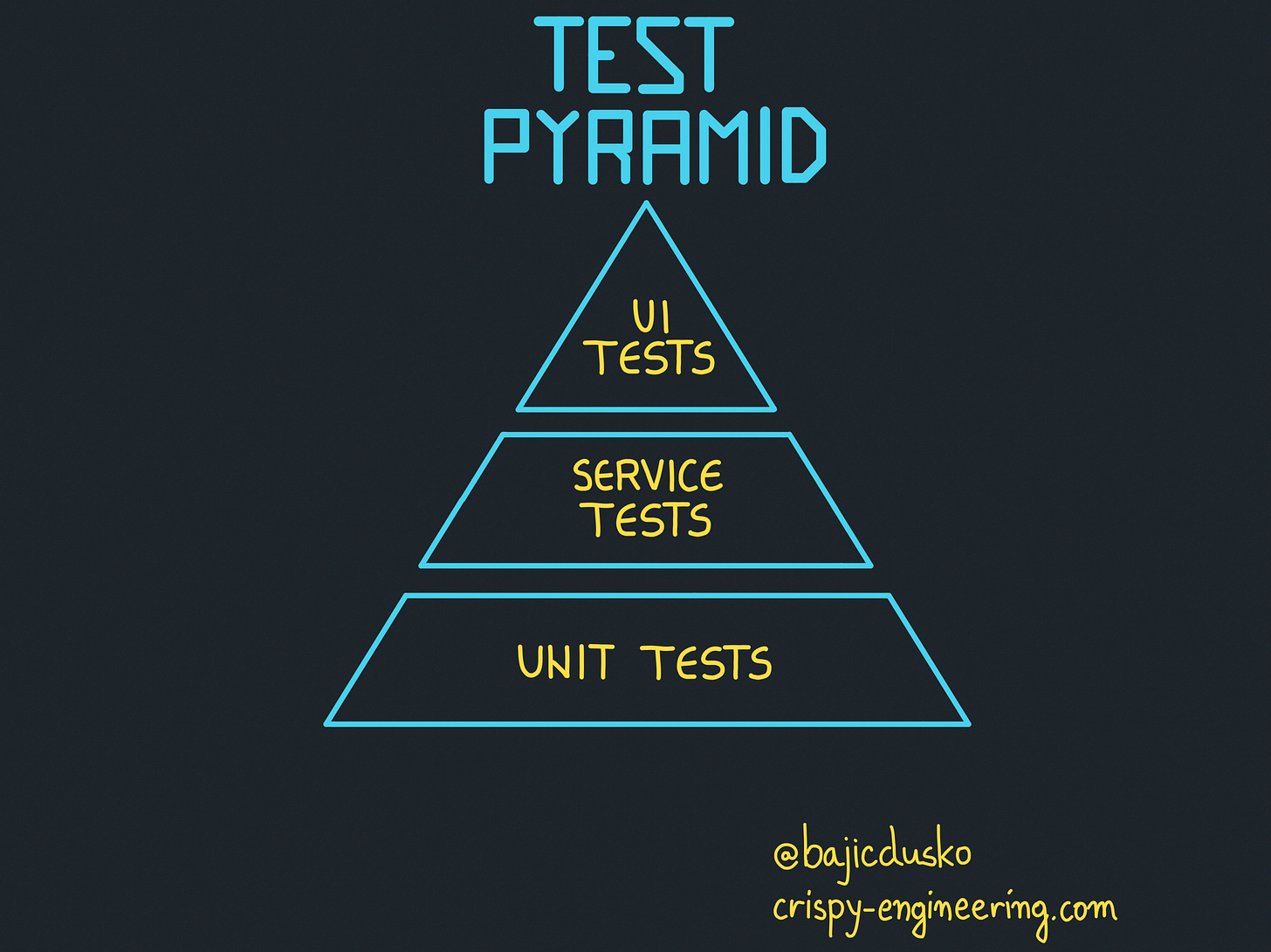

In his book "Succeeding with Agile", Mike Cohn came up with the concept of the test pyramid. It's a visual metaphor presenting three layers of different testing procedures (or test types) that we write for our software product.

Ham Vocke extended this idea with “The Practical Test Pyramid”, an article published on Martin Fowler's website.

The original model no longer fit the industry's needs, and by focusing on test automation, the article took the test pyramid to the next level.

Here is an excerpt from that article:

Unfortunately the concept of the test pyramid falls a little short if you take a closer look. Some argue that either the naming or some conceptual aspects of Mike Cohn's test pyramid are not ideal, and I have to agree. From a modern point of view the test pyramid seems overly simplistic and can therefore be misleading.

Still, due to its simplicity the essence of the test pyramid serves as a good rule of thumb when it comes to establishing your own test suite.

Ham Vocke, “The Practical Test Pyramid”

The industry has evolved again, and the stakes have changed when it comes to testing software products.

Product size matters

I have worked on dozens of software projects in the past 13 years. They range from projects for global conglomerates in the car industry to small startup apps.

The amount of money poured into startups over the last decade is hard to imagine. Startups usually have one goal in the beginning: ship fast, go to market as soon as possible, iterate fast, repeat. This results in half-baked MVPs reaching production.

It's fair to say that in the majority of cases, these products don't have any tests at all.

Those that do have tests are not even close to the test pyramid model.

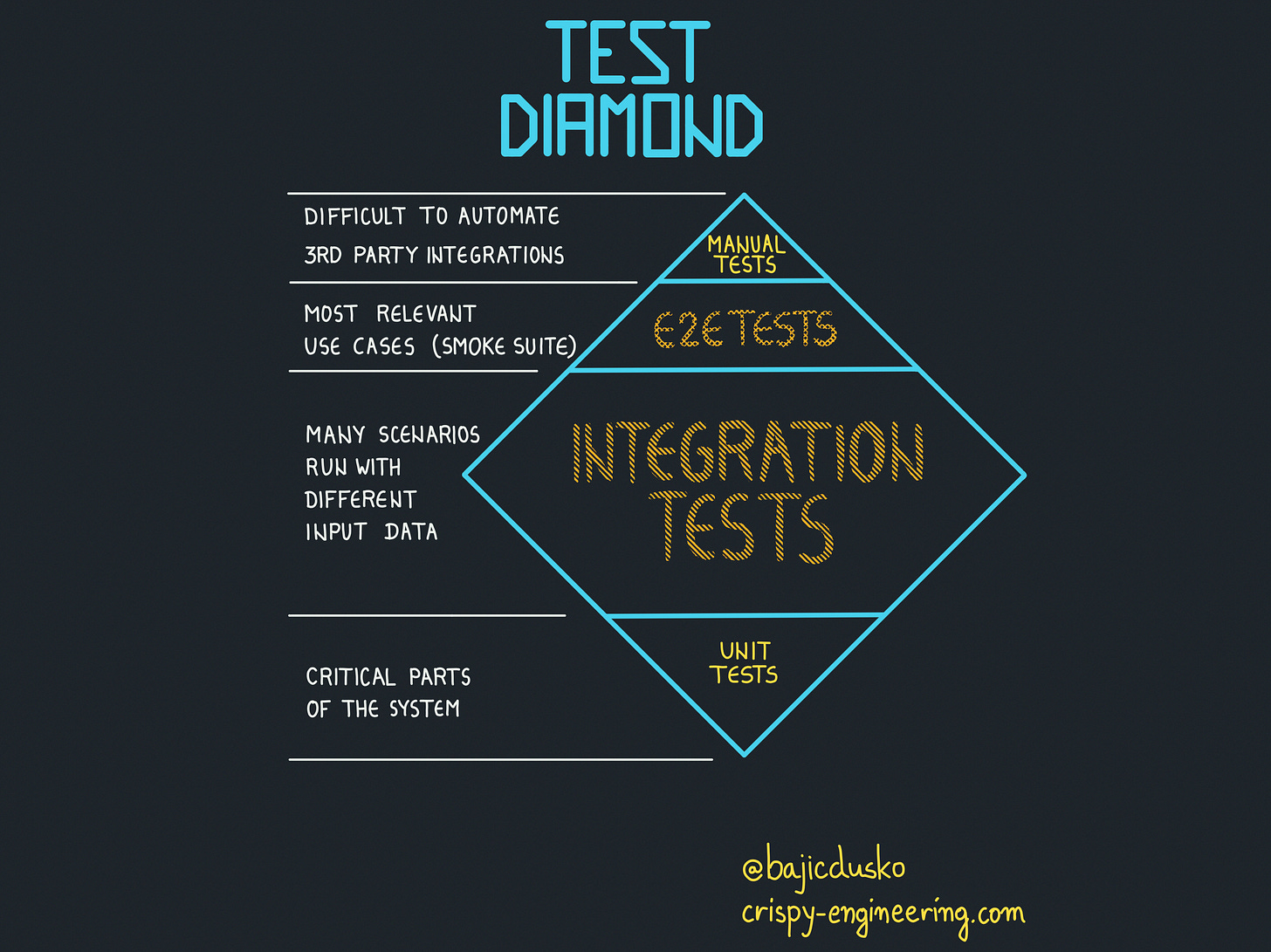

Test diamond

What I have seen work in practice, and what tends to emerge organically, is the test diamond model.

It consists of 4 major layers.

Unit tests

Integration tests

E2E tests

Manual tests

Unit tests

Unit tests are written for critical parts of the system. If it's an accounting app, we'll test specific accounting rules as units.

Or we might test a method that parses a string into a number with six-decimal precision, rounding up.
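Here is a minimal sketch of such a unit test, using Python's standard `decimal` and `unittest` modules. The `parse_amount` helper is hypothetical. The sketch also assumes that "rounding up" means half-up rounding (`ROUND_HALF_UP`). If you mean always rounding toward positive infinity, swap in `ROUND_CEILING`. The test touches no I/O and needs no setup, which is why this layer stays small and fast.

```python
from decimal import Decimal, InvalidOperation, ROUND_HALF_UP
import unittest

# Quantization target: six decimal places.
SIX_PLACES = Decimal("0.000001")


def parse_amount(text: str) -> Decimal:
    """Parse a string into a Decimal with six decimal places.

    Hypothetical helper; "rounding up" is assumed to mean ROUND_HALF_UP.
    """
    try:
        value = Decimal(text.strip())
    except InvalidOperation as exc:
        # Decimal raises InvalidOperation for non-numeric strings.
        raise ValueError(f"not a number: {text!r}") from exc
    return value.quantize(SIX_PLACES, rounding=ROUND_HALF_UP)


class ParseAmountTest(unittest.TestCase):
    def test_pads_to_six_decimals(self):
        self.assertEqual(str(parse_amount("12.5")), "12.500000")

    def test_rounds_half_up_at_seventh_decimal(self):
        self.assertEqual(parse_amount("1.2345675"), Decimal("1.234568"))

    def test_rounds_down_below_half(self):
        self.assertEqual(parse_amount("1.2345674"), Decimal("1.234567"))

    def test_rejects_non_numeric_input(self):
        with self.assertRaises(ValueError):
            parse_amount("abc")
```

Save it in a test module and run it with `python -m unittest`.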

Integration tests

Integration tests are the most numerous layer. We lean on their stability to guarantee a working product.

Integration tests usually start at the endpoint level (assuming we're building a service exposed through an API).

Some teams also cover these tests with Postman.

The advantages are:

we exercise the whole vertical of the call stack, which helps with code coverage

by changing the input, we can make a different vertical get called

we're testing the scenarios most likely to happen in production

less time is spent on mocking everything and running the test in isolation

they can run in CI the same way unit tests do

The disadvantages are:

they are slower to execute

the tests are bigger

more effort goes into seeding the right data for different scenarios
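Here is a minimal sketch of what starting at the endpoint level can look like, using only Python's standard library. The `/invoices/<id>` endpoint, the in-memory `INVOICES` store and the `seed` helper are hypothetical stand-ins. In a real project the server would be your actual application, and seeding would write fixtures to a test database. Each test sends a real HTTP request, so routing, lookup and serialization all run together. Changing only the input (an existing ID versus a missing one) sends the request down a different vertical.

```python
import json
import threading
import unittest
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.error import HTTPError
from urllib.request import urlopen

# Hypothetical data store standing in for a real database.
INVOICES = {}


def seed(invoices):
    """Reset the store and seed data for one scenario (hypothetical helper)."""
    INVOICES.clear()
    INVOICES.update(invoices)


class InvoiceHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Naive routing: take the last path segment as the invoice ID.
        invoice_id = self.path.rsplit("/", 1)[-1]
        invoice = INVOICES.get(invoice_id)
        if invoice is not None:
            status, body = 200, invoice
        else:
            status, body = 404, {"error": "not found"}
        payload = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, *args):
        pass  # keep test output quiet


class InvoiceEndpointTest(unittest.TestCase):
    @classmethod
    def setUpClass(cls):
        # Port 0 lets the OS pick a free port.
        cls.server = HTTPServer(("127.0.0.1", 0), InvoiceHandler)
        cls.base = f"http://127.0.0.1:{cls.server.server_address[1]}"
        threading.Thread(target=cls.server.serve_forever, daemon=True).start()

    @classmethod
    def tearDownClass(cls):
        cls.server.shutdown()
        cls.server.server_close()

    def setUp(self):
        # Every scenario needs its own seeded data.
        seed({"42": {"id": "42", "total": "99.90"}})

    def test_existing_invoice_is_returned(self):
        with urlopen(f"{self.base}/invoices/42") as resp:
            self.assertEqual(resp.status, 200)
            self.assertEqual(json.load(resp)["total"], "99.90")

    def test_missing_invoice_takes_the_404_path(self):
        with self.assertRaises(HTTPError) as ctx:
            urlopen(f"{self.base}/invoices/7")
        self.assertEqual(ctx.exception.code, 404)
        ctx.exception.close()
```

The `seed` call in `setUp` is where the seeding cost shows up: every new scenario needs its own data. The payoff is that one request covers the whole stack with no mocks.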

E2E tests

These are complete use cases from the user's perspective. We assume UI tests in this case. E2E tests are usually written in the scale-up phase of product development.

At this point, the company has enough money and enough users to start optimizing the test process.

UI E2E tests are hard to maintain. They are very slow to execute and suffer from a high rate of flaky runs.

A huge blocker is the lack of know-how in writing test scenarios. These scenarios focus not on implementation details but on the user experience.

Manual tests

Not everything can be automated, and that's completely fine. There are countless cases where a human needs to sit and click through the product.

Usually, this is the case with third-party integrations.

For example, you have to upload an invoice to a third-party service and check that service's UI dashboard to confirm the invoice is there.

Manual testing can't be avoided, but it should be kept to a minimum. The fact is, in the MVP stages of product development, manual testing is absolutely dominant.

So why the test diamond?

Because it makes product delivery efficient while keeping the product stable.

By focusing on integration tests, we cover the most frequently used code paths. If a minority of users stumble upon a bug, fine: that's maybe 2 out of 10. Once that happens, we write a fix for it.

By now you must be wondering:

“What’s the catch? None of this is a surprise to me.”

Well, I want to tell you: if your test setup looks more like a diamond, that’s fine. It works for others, it works for you, and everyone’s happy.

Often we’re judged and feel guilty because we’re not following some methodology by the book. It’s fine not to. Processes evolve.

“A bug-free software product doesn't exist, and it is important for a software product not to cease to exist.”

Unknown, but honest product owner
